Multithreading is central to efficient application execution and resource utilization. Threads let an operating system run several tasks concurrently within a single process, which improves program performance and responsiveness. This article covers the thread concept, the components of a thread, thread types, benefits, and common threading issues in operating systems.

What is a Thread in an Operating System?

A thread is the smallest unit of execution within a process. A process may contain one thread or many, and each thread executes independently as part of the same program. All threads in a process share its resources, such as the address space and open files, but each thread has its own stack, program counter, and registers. Because they run independently while sharing resources, threads are often called lightweight processes.
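The split between shared and private state can be sketched in Python. This is a minimal illustration, not a definitive implementation: the names `shared_results`, `worker`, and `run_workers` are made up for this example. The list is visible to every thread, while each thread's local variable lives on its own stack.

```python
import threading

shared_results = []              # process memory: visible to every thread
results_lock = threading.Lock()  # protects the shared list


def worker(n):
    local_square = n * n         # local variable: lives on this thread's own stack
    with results_lock:           # guard the shared list while appending
        shared_results.append(local_square)


def run_workers(count=4):
    shared_results.clear()
    threads = [threading.Thread(target=worker, args=(i,)) for i in range(count)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()                 # wait for every thread to finish
    return sorted(shared_results)


if __name__ == "__main__":
    print(run_workers())         # [0, 1, 4, 9]
```

The results are sorted before being returned because the order in which threads append to the shared list is not guaranteed.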

Components of a Thread

A thread in an operating system has three main components:

- Program Counter: Tracks the address of the next instruction the thread will execute.

- Register Set: Each thread has its own set of registers, which hold intermediate values during execution.

- Stack Space: Each thread has its own stack, which stores function call frames, local variables, and return addresses.

Why Do We Need Threads?

Threads provide several advantages in operating systems and programming, including:

- Lower resource consumption: Threads in a process share the same memory and resources, so adding a thread costs far less memory than adding a process.

- Faster creation and termination: Threads are created and terminated much faster than processes.

- Less context switching time: Switching between threads of the same process takes less time than switching between processes, because the address space does not change.

- Parallelism: Multiple threads of a single process can run in parallel on different CPU cores, which lets an application do several things at once.

Types of Threads

Threads fall into two categories:

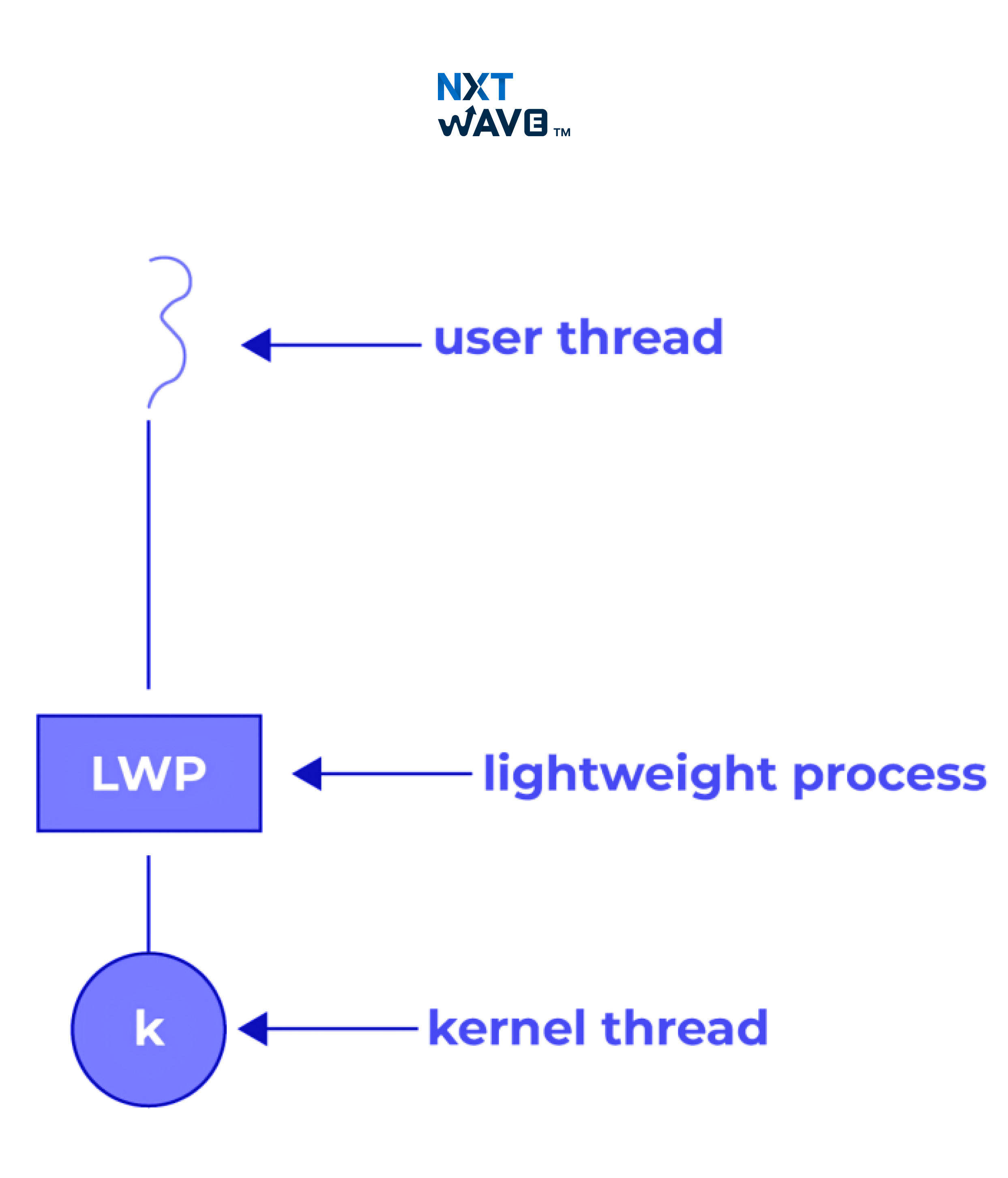

1. User-Level Thread:

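- These threads are managed in user space by a thread library, and the kernel is usually unaware of them.

- They are fast to create and switch because no kernel intervention is needed.

- However, if one user-level thread makes a blocking system call, the entire process may block, because the kernel sees only a single schedulable entity.

- Example: the "green threads" of early Java versions and GNU Portable Threads. POSIX threads (Pthreads) is an API that can be implemented at either level; on Linux it is backed by kernel-level threads.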

2. Kernel-Level Thread:

- The kernel creates, schedules, and switches these threads directly.

- They are slower to create and switch than user-level threads because each operation involves the kernel.

- If one kernel-level thread blocks, the other threads in the same process can still run.
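The last point can be sketched in Python, assuming CPython on a mainstream operating system, where each `threading.Thread` is backed by a kernel-level thread. The function name `demo_blocked_thread` is hypothetical. One thread blocks on an event while another finishes its work.

```python
import threading


def demo_blocked_thread():
    release = threading.Event()
    log = []

    def blocked():
        release.wait()                  # blocks this thread until the event is set
        log.append("blocked thread resumed")

    def busy():
        log.append(sum(range(1000)))    # useful work done while the other thread waits

    t_blocked = threading.Thread(target=blocked)
    t_busy = threading.Thread(target=busy)
    t_blocked.start()
    t_busy.start()
    t_busy.join()                       # completes even though t_blocked is still waiting
    still_blocked = t_blocked.is_alive()
    release.set()                       # now let the blocked thread continue
    t_blocked.join()
    return still_blocked, log


if __name__ == "__main__":
    print(demo_blocked_thread())
```

With a purely user-level library and a blocking system call, the second thread would not get to run until the first one was unblocked.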

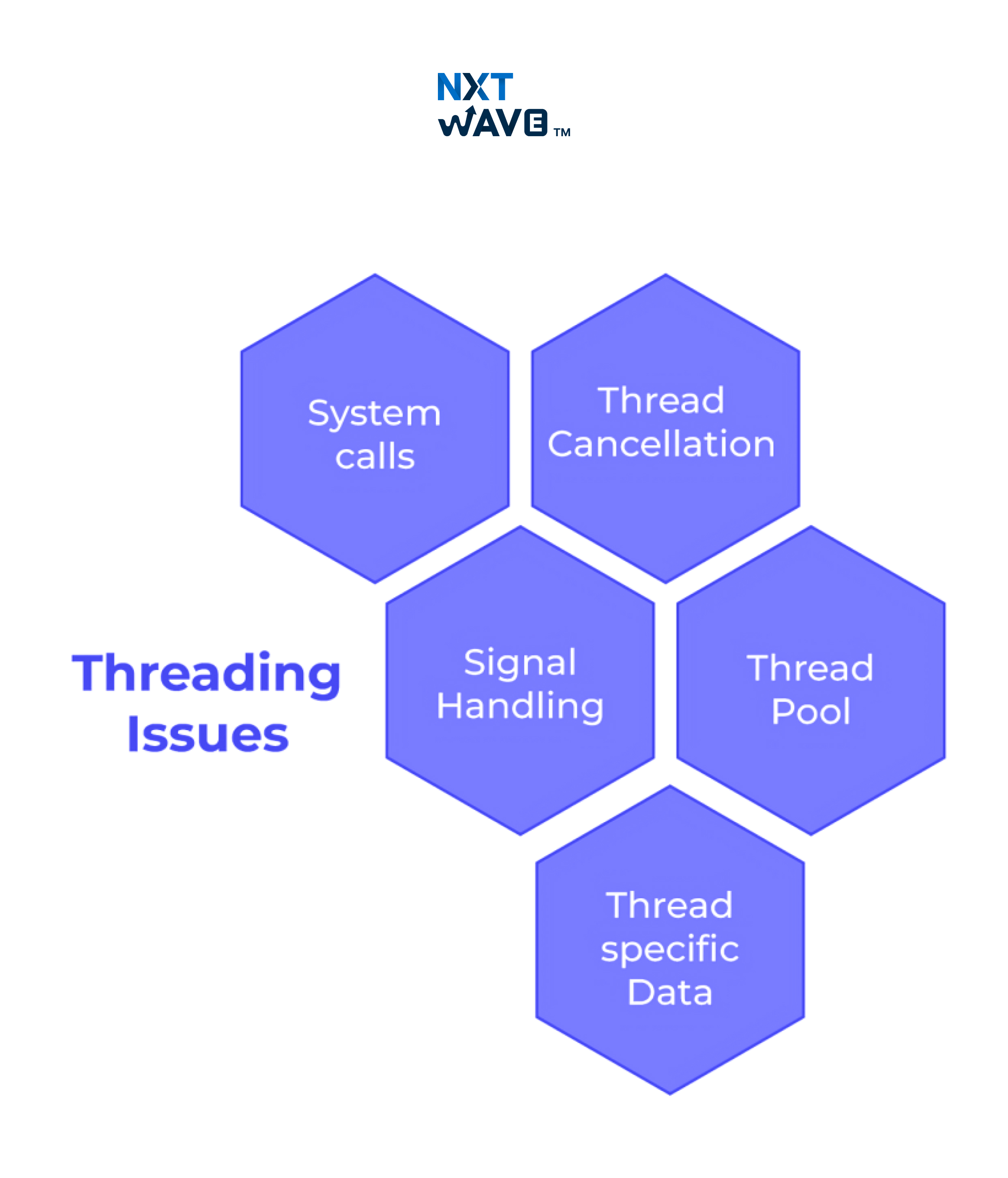

Issues with Threading

Threading raises several issues that a multithreaded program has to handle:

1. System Calls

In many Unix-like operating systems, two basic system calls are defined for process creation and management:

- fork(): Creates a child process that is a copy of the calling process. In a multithreaded program the question is which threads the child receives. Some systems have offered a variant that duplicates every thread, while POSIX fork() duplicates only the thread that called it.

- exec(): Replaces the image of the running process with a new program. Because it replaces the whole process, all of its threads are replaced as well, so a fork() that is followed immediately by exec() has no need to duplicate every thread.
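The fork-then-exec pattern can be sketched with Python's thin wrappers over these calls. This is a Unix-only sketch: `fork_exec_exit_code` is a hypothetical helper, and the exit code 7 is arbitrary. The child replaces itself with a new interpreter and the parent collects its exit status.

```python
import os
import sys


def fork_exec_exit_code():
    pid = os.fork()                      # duplicate the calling process (Unix only)
    if pid == 0:
        # Child: replace this process image with a fresh Python interpreter.
        try:
            os.execv(sys.executable,
                     [sys.executable, "-c", "import sys; sys.exit(7)"])
        finally:
            os._exit(127)                # reached only if exec failed
    _, status = os.waitpid(pid, 0)       # Parent: wait for the child to finish
    return os.WEXITSTATUS(status)        # exit code chosen by the new program


if __name__ == "__main__":
    print(fork_exec_exit_code())         # 7
```

Because the child calls exec straight away, it does not matter that only the forking thread was copied into it.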

2. Thread Cancellation

Thread cancellation means terminating a thread before it has completed its task. The thread to be cancelled is called the target thread, and there are two main forms:

- Asynchronous Cancellation: The target thread is terminated immediately, which is dangerous because it can leave shared resources in an inconsistent state.

- Deferred Cancellation: The target thread periodically checks whether it has been asked to terminate and, if so, exits at a safe point after cleaning up its resources.
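Python has no call for killing a thread, so the sketch below imitates deferred cancellation with a flag that the worker checks on each loop iteration. It is an analogue of the idea, not the Pthreads cancellation API, and the names `run_cancellable_worker` and `cancel_requested` are made up for this example.

```python
import threading


def run_cancellable_worker(max_steps=100_000):
    cancel_requested = threading.Event()
    started = threading.Event()
    state = {"steps": 0, "cleaned_up": False}

    def worker():
        try:
            for _ in range(max_steps):
                if cancel_requested.is_set():   # cancellation point: safe place to stop
                    break
                state["steps"] += 1             # one unit of "work"
                started.set()
        finally:
            state["cleaned_up"] = True          # cleanup runs however the loop ends

    t = threading.Thread(target=worker)
    t.start()
    started.wait()             # make sure the worker has done at least one step
    cancel_requested.set()     # ask the worker to stop; do not kill it
    t.join()
    return state


if __name__ == "__main__":
    print(run_cancellable_worker())
```

The number of steps completed varies from run to run, but the cleanup flag is always set because the worker chooses where to stop.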

3. Signal Handling

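In Unix-based systems, signals notify a process that a particular event has occurred. They can be divided into two groups:

- Asynchronous Signals: Generated by an event outside the running process, such as a Ctrl+C keystroke or a timer expiring.

- Synchronous Signals: Caused by the running process's own actions, such as an illegal memory access or division by zero, and delivered to that same process.

Threaded applications must decide which thread handles each signal, so that a signal meant for one thread is not delivered to another thread and does not disrupt it.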
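One possible delivery policy is to route every signal to a single designated thread. CPython follows this policy: Python-level signal handlers always run in the main thread. The sketch below is Unix-only and assumes it is called from the main thread. `signal_handled_in_main_thread` is a hypothetical helper name, and `SIGUSR1` is chosen only because it is free for application use.

```python
import os
import signal
import threading
import time


def signal_handled_in_main_thread(timeout=2.0):
    handled_by = []

    def handler(signum, frame):
        handled_by.append(threading.current_thread().name)

    # signal.signal() may only be called from the main thread.
    previous = signal.signal(signal.SIGUSR1, handler)
    try:
        # A worker thread sends the signal to the whole process.
        sender = threading.Thread(
            target=lambda: os.kill(os.getpid(), signal.SIGUSR1), name="sender"
        )
        sender.start()
        sender.join()
        deadline = time.monotonic() + timeout
        while not handled_by and time.monotonic() < deadline:
            time.sleep(0.01)             # give the interpreter a chance to run the handler
    finally:
        # Restore whatever handler was installed before.
        signal.signal(signal.SIGUSR1,
                      previous if previous is not None else signal.SIG_DFL)
    return handled_by


if __name__ == "__main__":
    print(signal_handled_in_main_thread())   # ['MainThread']
```

Even though the signal is raised from the thread named "sender", the handler reports the main thread.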

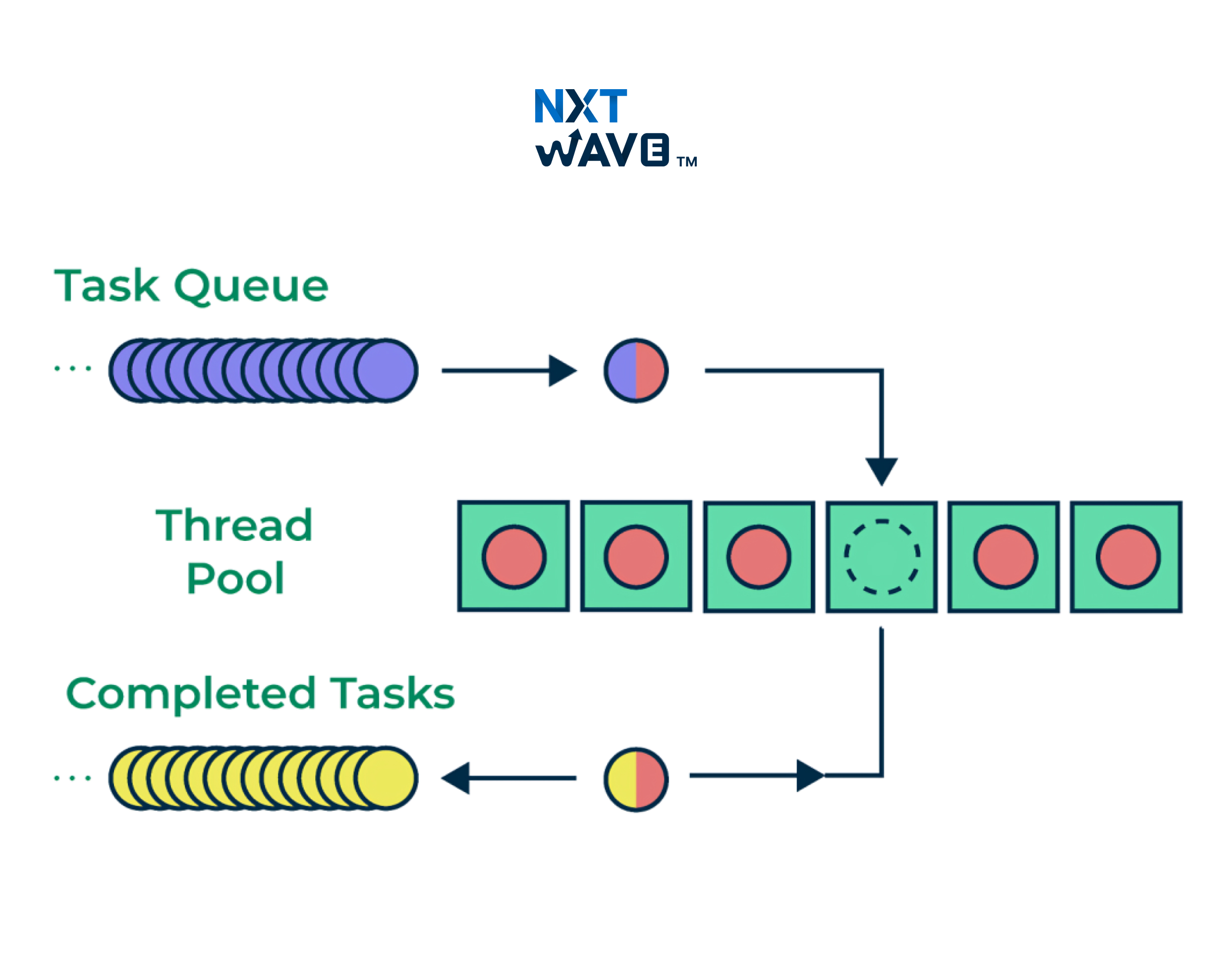

4. Thread Pool

A thread pool is a set of threads created in advance and kept ready to execute incoming tasks. Instead of creating a new thread for each task, the application hands the task to an idle thread from the pool, and the thread returns to the pool when the task is done. Thread pools are especially useful when tasks are frequent and short, because creating and destroying a thread for each one would add considerable overhead.
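A minimal sketch using `ThreadPoolExecutor` from Python's standard library shows the idea. The task function `handle_task` and the pool size of 4 are illustrative assumptions. Twenty tasks are served by at most four reusable threads.

```python
import threading
from concurrent.futures import ThreadPoolExecutor


def handle_task(n):
    # Return the result together with the name of the pool thread that ran it.
    return n * n, threading.current_thread().name


def run_pool(task_count=20, pool_size=4):
    with ThreadPoolExecutor(max_workers=pool_size) as pool:
        # map() hands each task to an idle pool thread and keeps input order.
        results = list(pool.map(handle_task, range(task_count)))
    squares = [square for square, _ in results]
    workers_used = {name for _, name in results}
    return squares, workers_used


if __name__ == "__main__":
    squares, workers_used = run_pool()
    print(squares[:5], len(workers_used))
```

The number of distinct worker names never exceeds the pool size, however many tasks are submitted.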

5. Thread-Specific Data

In multithreading, thread-specific data is data of which each thread has its own private copy. It lets each thread work independently without disturbing the others, even though they all share the same process. Thread-specific data is useful when separate threads carry out tasks that need isolated state, for instance when each thread handles a transaction with its own transaction ID in a financial application.
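Python exposes this idea as `threading.local`. In the sketch below, the names `context`, `process_transaction`, and the sample IDs are made up for illustration. Every thread writes to the same attribute name, yet each reads back only its own value.

```python
import threading

context = threading.local()      # each thread sees its own attributes on this object


def process_transaction(txn_id, seen, barrier):
    context.txn_id = txn_id      # stored per thread, not shared
    barrier.wait()               # every thread has stored its ID before any reads
    seen[txn_id] = context.txn_id


def run_transactions(ids=("T-100", "T-200", "T-300")):
    seen = {}
    barrier = threading.Barrier(len(ids))
    threads = [
        threading.Thread(target=process_transaction, args=(txn_id, seen, barrier))
        for txn_id in ids
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return seen


if __name__ == "__main__":
    print(run_transactions())
```

If `context` were an ordinary shared object, the barrier would guarantee that every thread read back the last value written, not its own.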

What is Process Synchronization?

Process synchronization refers to the mechanisms that let multiple processes or threads access shared resources concurrently without conflict. It matters most in multithreaded programs, where a race condition can arise whenever the outcome depends on the timing of the threads' access to shared data.

What is a Race Condition?

A race condition arises when two or more threads access shared data concurrently, at least one of them modifies it, and the result depends on the order in which the threads happen to run. The behaviour can vary from run to run and can produce wrong results. Race conditions are generally prevented with synchronisation mechanisms such as mutexes and semaphores.
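Real race conditions are intermittent, so the sketch below uses a barrier to force the unlucky interleaving every time. The function name `lost_update_demo` is hypothetical. Both threads read the counter before either writes, and one increment is lost.

```python
import threading


def lost_update_demo():
    counter = {"value": 0}
    both_have_read = threading.Barrier(2)

    def unsafe_increment():
        snapshot = counter["value"]       # step 1: read the shared value
        both_have_read.wait()             # force both reads to happen before any write
        counter["value"] = snapshot + 1   # step 2: write back a stale result

    threads = [threading.Thread(target=unsafe_increment) for _ in range(2)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter["value"]


if __name__ == "__main__":
    print(lost_update_demo())             # 1, although two increments ran
```

Without the barrier the same bug would still exist, but it would show up only occasionally, which is what makes race conditions hard to reproduce.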

What is a Mutex?

A mutex (mutual exclusion lock) is a synchronisation primitive that allows only one thread at a time to access a resource. It prevents race conditions by ensuring that only one thread can be inside the critical section, the code that touches the shared resource, at any moment. A thread must "lock" the mutex before accessing the resource and "unlock" it when finished, which allows other threads to take their turn.
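A minimal sketch with Python's `threading.Lock` shows the lock and unlock pattern. The function name `locked_counter` and the thread and iteration counts are illustrative assumptions. Each increment happens inside the critical section, so no update is lost.

```python
import threading


def locked_counter(thread_count=4, increments=10_000):
    counter = {"value": 0}
    mutex = threading.Lock()

    def add():
        for _ in range(increments):
            with mutex:                   # lock on entry, unlock on exit
                counter["value"] += 1     # critical section: one thread at a time

    threads = [threading.Thread(target=add) for _ in range(thread_count)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter["value"]


if __name__ == "__main__":
    print(locked_counter())               # 40000
```

The `with` statement releases the mutex even if the critical section raises an exception, which avoids leaving it locked forever.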

What is a Deadlock?

In the simplest terms, a deadlock occurs when two or more threads are blocked forever, each waiting for another to release a resource. Deadlocks are a serious issue in multithreaded programming because they can freeze an application entirely. Prevention strategies include always acquiring resources in the same fixed order and using timeouts when acquiring locks.
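The fixed-order strategy can be sketched as follows. The `Account` class and the `transfer` and `run_opposing_transfers` functions are hypothetical names for this example. Two threads transfer money in opposite directions, which would risk a deadlock if each locked its source account first, but both always lock the account with the smaller ID first.

```python
import threading


class Account:
    def __init__(self, account_id, balance):
        self.account_id = account_id
        self.balance = balance
        self.lock = threading.Lock()


def transfer(src, dst, amount):
    # Fixed global order: always lock the account with the smaller ID first.
    first, second = sorted((src, dst), key=lambda acct: acct.account_id)
    with first.lock:
        with second.lock:
            src.balance -= amount
            dst.balance += amount


def run_opposing_transfers(rounds=1000):
    a = Account(1, 1000)
    b = Account(2, 1000)

    def a_to_b():
        for _ in range(rounds):
            transfer(a, b, 1)

    def b_to_a():
        for _ in range(rounds):
            transfer(b, a, 1)

    threads = [threading.Thread(target=a_to_b), threading.Thread(target=b_to_a)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return a.balance, b.balance


if __name__ == "__main__":
    print(run_opposing_transfers())       # (1000, 1000)
```

The timeout strategy is also available in Python: `lock.acquire(timeout=...)` returns `False` instead of waiting forever, so the caller can release what it holds and retry.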

Difference Between Process and Thread

The main differences between a process and a thread are:

| Process | Thread |
| --- | --- |
| A process is an independent program in execution. | A thread is the smallest unit of execution within a process. |
| Each process has its own resources (memory, open files, etc.). | Threads share resources (memory, code) within the same process. |
| Slower to create and terminate because resources must be allocated. | Faster to create and terminate because resources are shared. |
| Each process has its own memory space. | Threads within a process share one memory space. |
| Communication between processes is slower and requires IPC (Inter-Process Communication). | Communication between threads is faster because they share the same memory space. |
| Processes are independent of one another. | Threads are interdependent and can access one another's data. |
| A failure in one process does not affect other processes. | A failure in one thread may affect the other threads in the same process. |
| Example: two separate applications running (such as two different web browsers). | Example: two tabs in the same browser (in browsers that run tabs as threads rather than separate processes). |

Advantages of Threading in Operating Systems

Here are the advantages of threading in the operating system:

- Increased performance: Multiple threads can be executed concurrently within a process, thus improving the system's throughput.

- Responsiveness: A multithreaded application stays responsive because some threads can handle I/O or computation while others keep interacting with the user.

- More efficient use of resources: Threads consume fewer resources since they share memory.

- Fast context switching: Threads enable faster context switching in a multitasking environment since they are lighter than processes.

Conclusion

In conclusion, threads are a vital operating system feature that lets a process carry out several operations concurrently, improving performance and resource efficiency. Their benefits include faster context switching and lower resource consumption, while their challenges include race conditions, deadlocks, and other synchronisation problems. Managing thread pools, mutexes, and thread-specific data properly is critical to building efficient and stable multithreaded applications. Handling this complexity well lets applications make full use of the resources offered by modern operating systems and hardware.

Frequently Asked Questions

1. What is Multithreading in an Operating System?

Multithreading allows a single process to execute several threads concurrently, which enhances performance by letting tasks run in parallel. It improves responsiveness and resource utilisation by dividing the work into smaller concurrent threads.

2. What are the disadvantages of threads in an OS?

The disadvantages of threads in an OS include added complexity, synchronisation overhead, and the possibility of deadlocks. Managing too many threads can waste resources, and debugging becomes harder because of non-deterministic behaviour, which makes multithreaded code difficult to maintain.

3. What is the issue with multithreaded programming?

The main issues with multithreaded programming are race conditions, deadlocks, and other synchronisation problems. Multithreading also increases complexity, resource consumption, and debugging difficulty, which makes efficient code harder to manage and maintain.
