Parallel operating systems emerged to meet the growing demand for fast, efficient computation that came with advances such as multicore processors and distributed systems. Traditional operating systems, built around a single processor handling one task at a time, struggled with the increasing complexity and scale of modern applications. Parallel operating systems let several tasks run at the same time on several processors, improving performance, resource utilisation, and system-level efficiency. This suits workloads such as scientific simulations, large-scale data processing, and real-time systems, where speed and accuracy are essential. This article explains how parallel operating systems work and discusses their types, applications, advantages, and disadvantages.

What is a Parallel Operating System?

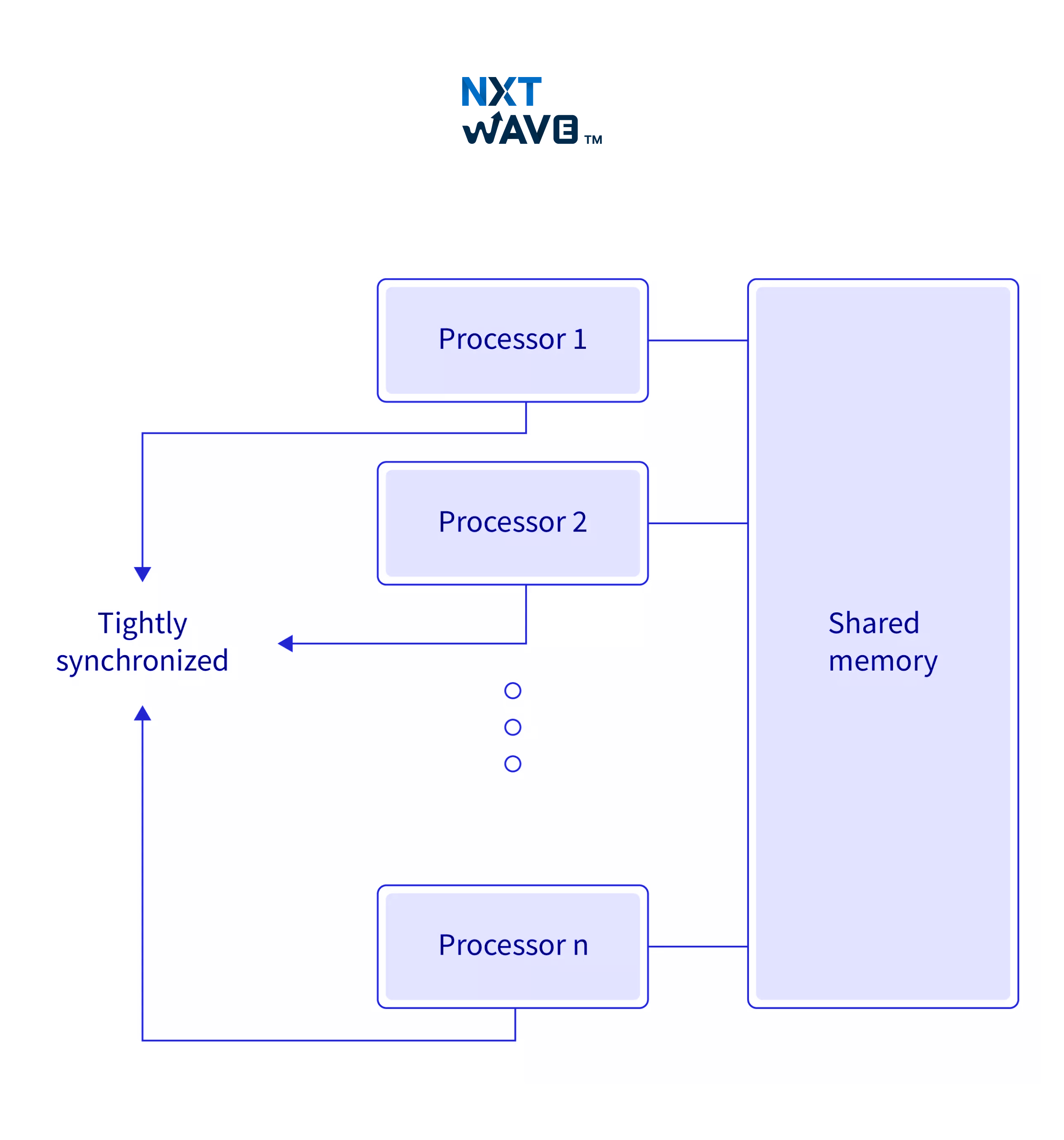

A parallel operating system is an operating system that controls and coordinates several processors or computers that work together to perform computations simultaneously. Programs are broken into smaller pieces (tasks) that can be executed at the same time on separate processors or on separate computers connected in a cluster. Such a system is useful both where multiple processors sit in one machine and where multiple machines are joined in a cluster.

How do Parallel Operating Systems Work?

Parallel operating systems partition a computational task into smaller sub-tasks and assign them to separate processors so that they run simultaneously. Because computation and I/O can proceed in parallel, processing and communication can be much faster than with serial processing, which handles one task at a time. The processors communicate with each other to synchronise and coordinate their tasks, which leads to better resource utilisation.

Unlike serial processing, parallel systems can perform multiple I/O operations at once, which increases throughput and reduces completion time. A parallel OS speeds up time-consuming operations by taking advantage of multi-core processors or clusters of machines rather than a single core.
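
As a rough illustration of the partitioning described above, the following Python sketch splits a list into chunks, hands one chunk to each worker process, and combines the partial results. The function names (`split_into_chunks`, `sum_of_squares`, `parallel_sum_of_squares`) and the workload are assumptions made for this example; the operating system, not the program, decides which processor runs each worker.

```python
from concurrent.futures import ProcessPoolExecutor


def split_into_chunks(items, n_chunks):
    """Partition a list into n_chunks contiguous, nearly equal sub-lists."""
    size, extra = divmod(len(items), n_chunks)
    chunks, start = [], 0
    for i in range(n_chunks):
        # The first `extra` chunks take one additional item each.
        end = start + size + (1 if i < extra else 0)
        chunks.append(items[start:end])
        start = end
    return chunks


def sum_of_squares(chunk):
    """The sub-task that each worker process runs on its own chunk."""
    return sum(x * x for x in chunk)


def parallel_sum_of_squares(items, workers=4):
    """Give one chunk to each worker process, then combine the partial results."""
    chunks = split_into_chunks(items, workers)
    with ProcessPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(sum_of_squares, chunks))


if __name__ == "__main__":
    data = list(range(1_000_000))
    # The parallel result must agree with the serial one.
    assert parallel_sum_of_squares(data) == sum_of_squares(data)
    print("parallel and serial results match")
```

On a multi-core machine the worker processes can run on different cores at once. For small inputs, the cost of starting processes and copying data can outweigh the gain, so the benefit shows mainly on larger workloads.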

Types of Parallel Operating Systems

The main types of parallel operating systems are:

Type-1: Native or Bare-metal

- A parallel OS of this type acts as a native hypervisor, running directly on the physical hardware without relying on a host OS.

- It does not require I/O emulation because it communicates directly with the hardware.

- An example is VMware ESXi, a Type-1 hypervisor that runs multiple virtual machines directly on the physical hardware.

Type-2: Hosted

- This type runs as a hosted hypervisor on top of an existing OS such as Linux or Windows.

- It uses a standard OS as the host for virtual machines or environments.

- Examples are VMware Workstation and Oracle VirtualBox, which run on top of a host OS such as Windows or Linux.

Applications of Parallel Operating Systems

Parallel operating systems are used across many areas needing intensive computing and fast execution of complex tasks. Some of the applications are:

- Databases and data mining: Speeds up the processing of very large volumes of data in big data management.

- Advanced graphics and gaming: Useful for real-time creation of high-quality game graphics.

- Augmented reality (AR): Processes multiple visual streams in real time for AR applications.

- Real-time system simulators: Simulates complex systems in engineering and physics.

- Scientific and engineering computing: Runs extensive simulations for scientific research or engineering applications, which usually demand immense computing power.

Examples of Parallel Operating Systems

Several parallel operating systems are available for different purposes. Some examples are:

- VMware ESXi: A Type-1 hypervisor for creating and running virtual machines.

- Microsoft Hyper-V: A Type-1 hypervisor used for creating virtual environments.

- Red Hat Enterprise Linux: A Linux-based OS capable of running parallel applications.

- Oracle VM: Assists in running multiple virtual machines within one host.

- KVM/QEMU: A Linux-based hypervisor used to create virtual machines on a single system.

- Sun xVM Server: Virtualisation technology originally from Sun Microsystems, now part of Oracle.

These systems allow numerous virtual machines, each with its own applications, to operate simultaneously. CPU and RAM are shared among the machines without one interfering with another, which improves performance and makes efficient use of resources.

Functions of Parallel Operating Systems

Parallel operating systems carry out several vital functions to efficiently manage parallel computing systems:

- Multiprocessing environment: Allows tasks to execute concurrently across multiple processors.

- Process security: Protects data integrity by ensuring that one process cannot affect another, thereby preventing unauthorised access.

- Task load management: Distributes work among the processors so that the load is balanced and efficiency is maintained.

- Process isolation: Ensures that different processes in the system do not interfere with each other and run correctly.

- Optimal resource usage: Ensures that the available hardware delivers the maximum performance.
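
Task load management can be pictured with a small scheduling sketch. The Python example below is not how any particular operating system balances load; it shows one simple heuristic (largest task first, always to the least-loaded processor), and the names `balance_tasks`, `task_costs`, and `n_processors` are hypothetical.

```python
import heapq


def balance_tasks(task_costs, n_processors):
    """Greedy assignment: largest task first, to the least-loaded processor."""
    # Heap of (current_load, processor_id); the smallest load is popped first.
    heap = [(0, p) for p in range(n_processors)]
    heapq.heapify(heap)
    assignment = {p: [] for p in range(n_processors)}
    for cost in sorted(task_costs, reverse=True):
        load, p = heapq.heappop(heap)            # least-loaded processor
        assignment[p].append(cost)
        heapq.heappush(heap, (load + cost, p))   # record its new load
    return assignment


if __name__ == "__main__":
    plan = balance_tasks([7, 5, 4, 3, 3, 2], n_processors=3)
    for p, tasks in plan.items():
        print(f"processor {p}: tasks {tasks}, load {sum(tasks)}")
```

A real scheduler rarely knows task costs in advance and also has to weigh priorities, cache locality, and migration cost, so this should be read only as a picture of the balancing idea.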

Advantages of Parallel Operating Systems

Parallel operating systems offer several benefits, particularly in compute-intensive and scalable environments:

- Execution time: Simultaneous execution significantly reduces processing time.

- Complex problems: They are well suited to large-scale, complex scientific and data analysis problems.

- Simultaneous resource usage: Multiple processors and resources may be used simultaneously, significantly increasing performance.

- Large memory and allocation: Larger datasets can be spread across more processors, which makes more memory available for allocation.

- Fast execution: Parallelism distributes tasks among various processors, so results are produced considerably sooner.
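
The article does not quantify the speed-up. One widely used estimate, brought in here as an outside assumption, is Amdahl's law: if a fraction p of a program can run in parallel on n processors, the overall speed-up is 1 / ((1 - p) + p / n). The helper name `amdahl_speedup` is hypothetical.

```python
def amdahl_speedup(parallel_fraction, n_processors):
    """Estimated speed-up under Amdahl's law (an idealised model)."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if n_processors < 1:
        raise ValueError("n_processors must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    # The serial part is unaffected; the parallel part is divided by n.
    return 1.0 / (serial_fraction + parallel_fraction / n_processors)


if __name__ == "__main__":
    for n in (2, 4, 8, 16):
        print(f"{n} processors: {amdahl_speedup(0.9, n):.2f}x")
```

The model ignores communication and synchronisation overhead, so measured speed-ups are usually lower. It does show why the serial portion of a program limits how much extra processors can help.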

Disadvantages of Parallel Operating Systems

The following are some disadvantages of parallel operating systems:

- Design complexity: Designing and maintaining parallel operating systems is challenging because work must be coordinated across several processors.

- High expense: Implementing parallel systems can be costly, as they require more hardware resources and better cooling, and they incur higher energy costs.

- High power consumption: More processors and resources increase energy consumption, so these systems are less energy-efficient.

- Maintenance: Parallel systems are complicated to install and need constant supervision and maintenance.

What is a Distributed System?

A distributed system is a network of independent computers that communicate and work together to achieve a common goal. These computers are often located in different geographical places and interact with one another over the network while sharing resources. Each node has its own memory, operates independently, and collaborates with the others to process tasks and share data. A distributed system should be scalable, fault-tolerant, and highly available; such systems are typically used in cloud computing, web services, and large-scale data processing applications.

Difference Between Parallel Operating System and Distributed System

Here are the differences between a parallel operating system and a distributed system:

| Parallel Operating System | Distributed System |
| --- | --- |
| Multiple processors or cores within a single machine or closely linked machines. | Multiple computers made interoperable through a network. |
| A task is divided between multiple processors, with simultaneous execution on all processors. | A task is spread across various computers, each proceeding independently. |
| Faster, because parallelism makes execution more effective. | Slower, because of communication latency and execution in isolation. |
| Memory is shared across processors. | Every computer works in its own local memory. |
| Tightly coupled: the processors sit close together and communicate directly. | Loosely coupled: communication is via a network. |
| A global clock coordinates the processes running on the processors. | No global clock; synchronisation algorithms are used instead. |
| Examples are supercomputers and high-performance computing clusters. | Examples are cloud computing platforms such as Google Cloud and Amazon Web Services (AWS). |
| Processors communicate through shared memory. | Computers communicate over network links, such as high-speed buses or telephone lines. |
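
The contrast between shared-memory and network-style communication can be sketched on a single machine. In the hedged Python example below, threads update one shared total under a lock (the parallel-system style), while separate processes keep private memory and send their results as messages through a queue that stands in for a network link (the distributed-system style). The function names are assumptions for illustration only.

```python
import threading
from multiprocessing import Process, Queue


def shared_memory_sum(values, workers=4):
    """Parallel style: threads add into one shared total, guarded by a lock."""
    total = 0
    lock = threading.Lock()

    def work(chunk):
        nonlocal total
        partial = sum(chunk)      # local computation
        with lock:                # synchronise access to the shared variable
            total += partial

    threads = [threading.Thread(target=work, args=(values[i::workers],))
               for i in range(workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return total


def _node(chunk, outbox):
    """Distributed style: private memory, result sent back as a message."""
    outbox.put(sum(chunk))


def message_passing_sum(values, workers=4):
    """Start one process per chunk and add up the messages they send."""
    outbox = Queue()              # stands in for a network link
    nodes = [Process(target=_node, args=(values[i::workers], outbox))
             for i in range(workers)]
    for n in nodes:
        n.start()
    total = sum(outbox.get() for _ in nodes)   # one message per node
    for n in nodes:
        n.join()
    return total


if __name__ == "__main__":
    data = list(range(1, 101))
    assert shared_memory_sum(data) == message_passing_sum(data) == sum(data)
    print("both communication styles give the same total")
```

Both functions compute the same result. What differs is where the data lives and how the workers coordinate, which is the distinction the table draws.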

Conclusion

In conclusion, parallel operating systems aim to enhance performance by breaking tasks into smaller parts that can be executed simultaneously. They play a crucial role in fields that require large-scale computations, including data mining, scientific simulations, and real-time systems. There are many types of parallel systems, including bare-metal and hosted hypervisors. Parallel systems facilitate process management by enhancing processing speed and resource utilisation. However, complexity, cost, and power consumption must be considered before implementation.

Frequently Asked Questions

1. What are Some Examples of Parallel Systems?

Examples include supercomputers such as IBM Blue Gene, as well as Beowulf clusters, in which many processors work in parallel to perform complex calculations.

2. What is Parallel Operation in Computers?

Parallel operation is when multiple tasks are executed simultaneously across several processors to accelerate computations and improve performance.

3. What are the Two Types of Parallel Systems?

There are two types of parallel systems:

- Multiprocessor Systems: Several processors share memory and are tightly coupled while executing tasks. Example: Supercomputers.

- Multicomputer Systems: Independent computers connected through a network, where each processor has its own memory. Example: Beowulf clusters.
